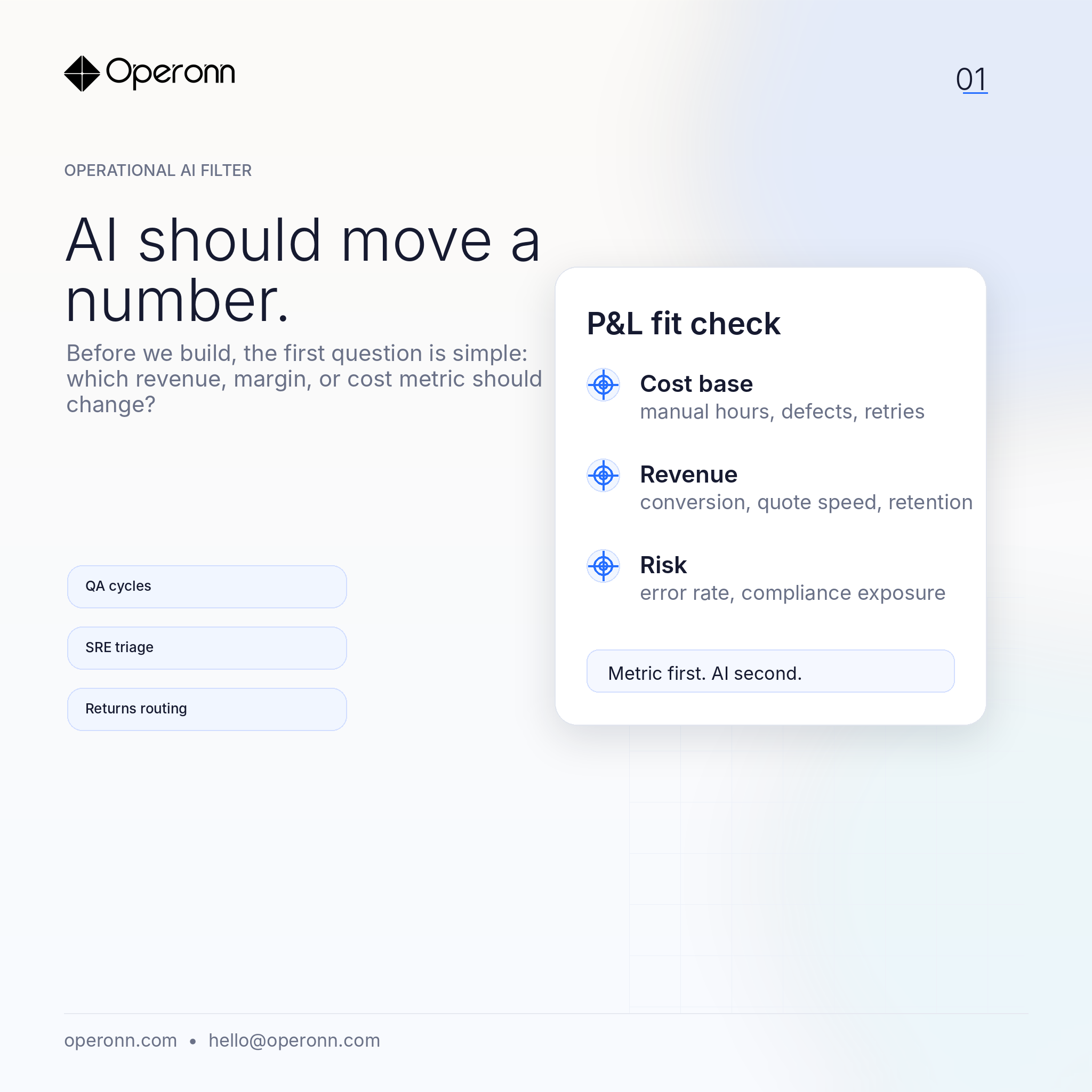

AI should move a number

Before we build, the first question is simple: which revenue, margin, or cost metric should change?

We write occasionally, when something we've shipped teaches us a lesson worth keeping. No content calendar. No newsletter funnel.

For bounded operational tasks, local inference can reduce latency, cost, and data-governance friction.

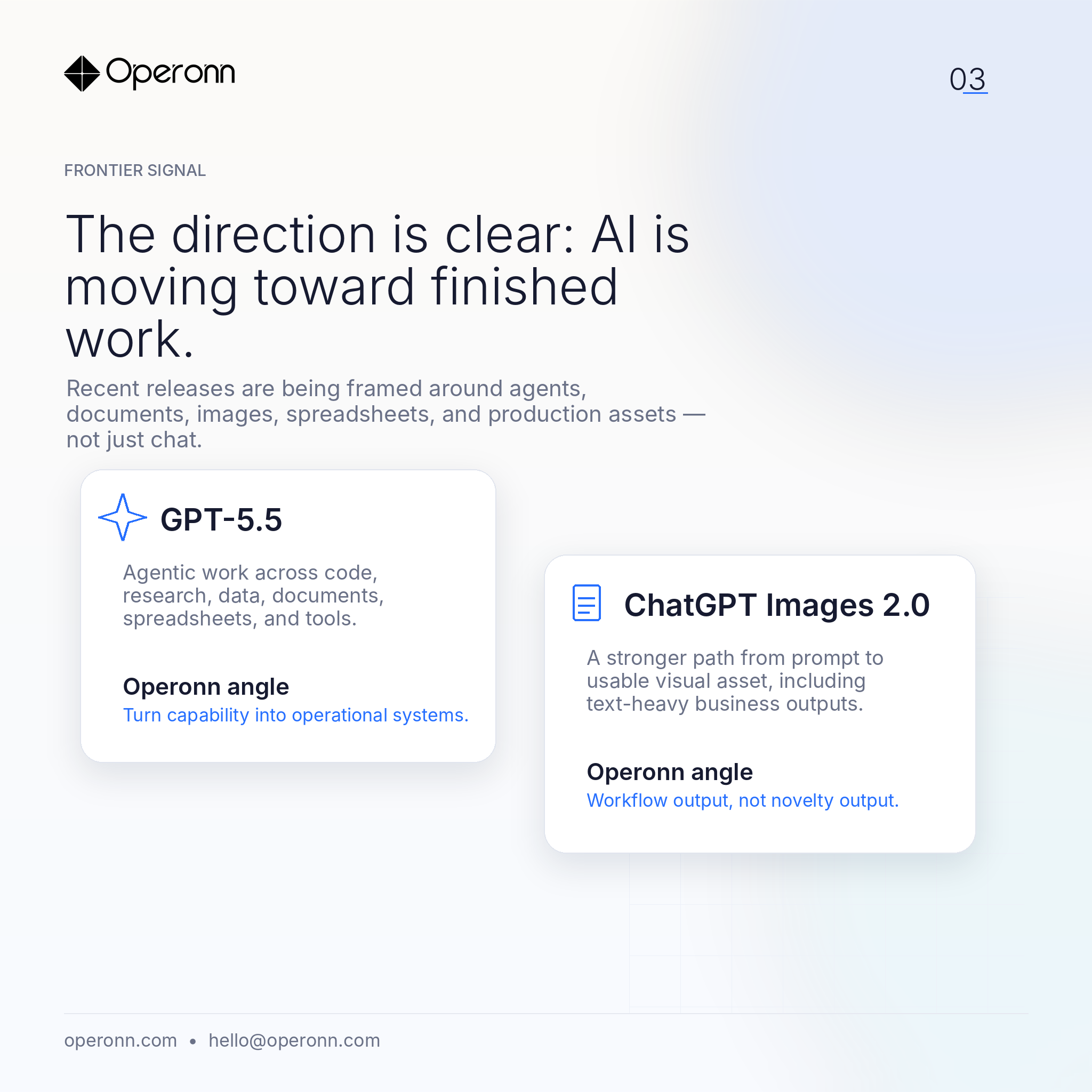

Recent releases are framed around agents, documents, images, and production assets — not just chat.

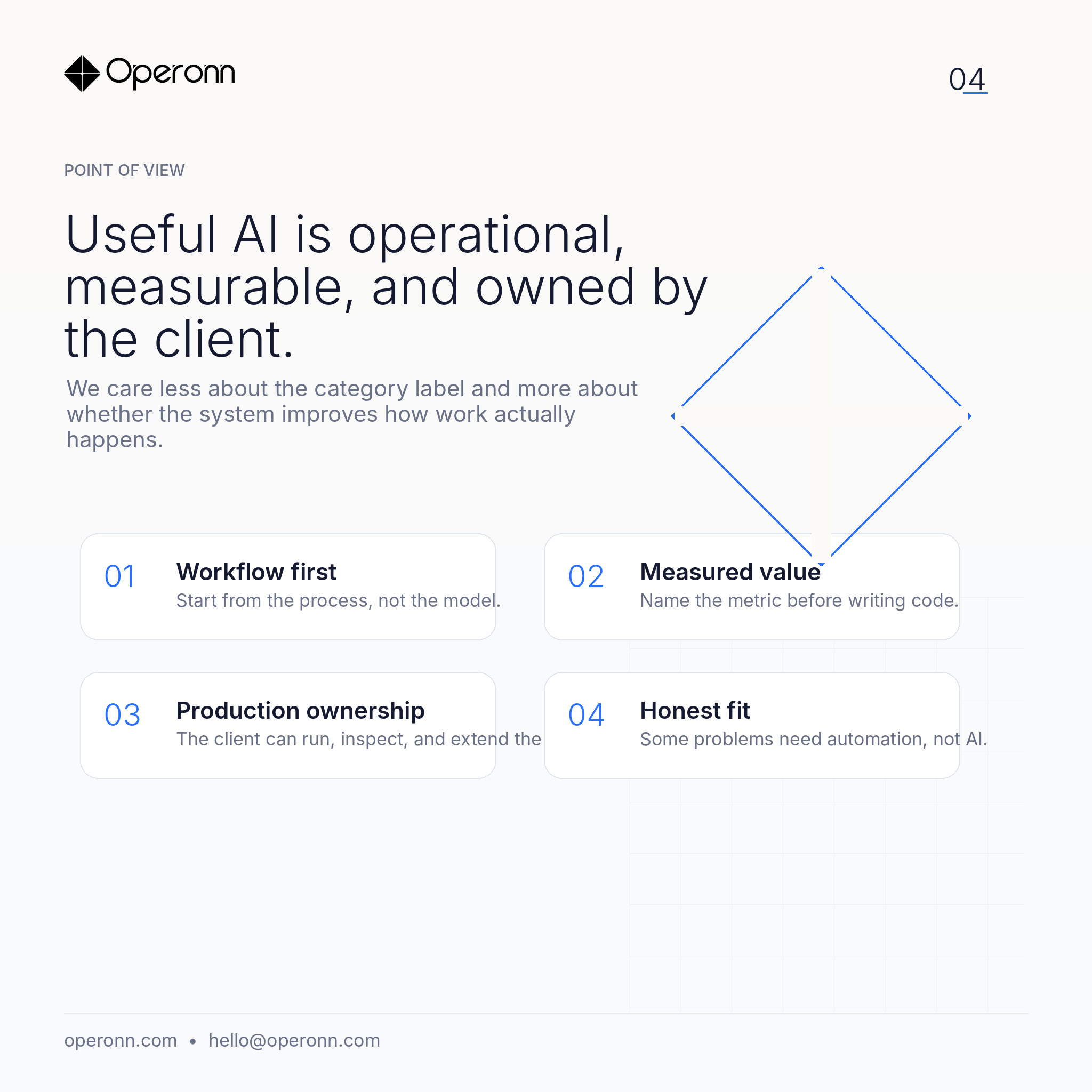

AI should be operational, measurable, and owned by the client. Sometimes the right answer is a simpler automation layer.

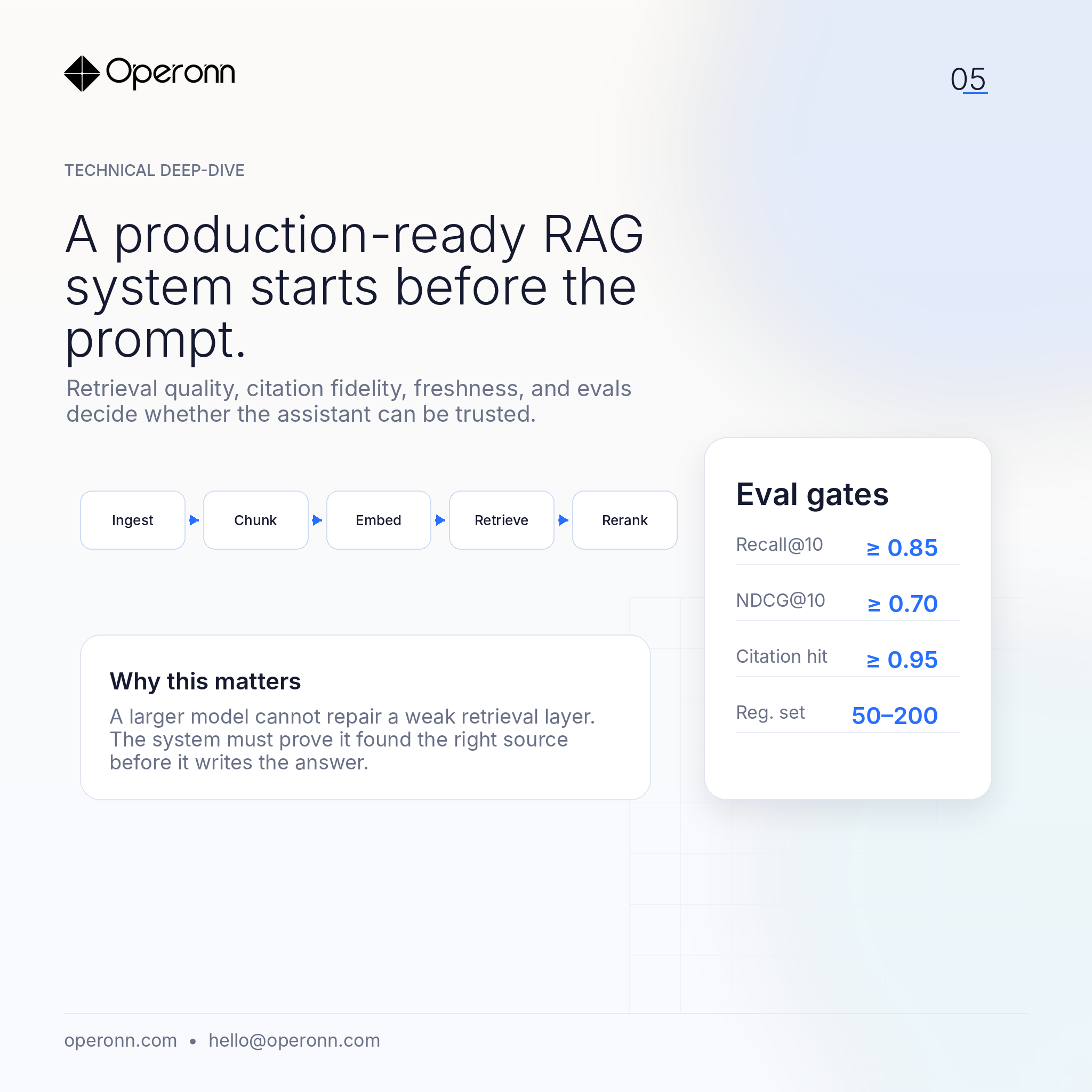

The model is the final step. The real work is in ingestion, chunking, hybrid retrieval, reranking, citation fidelity, and evaluation.

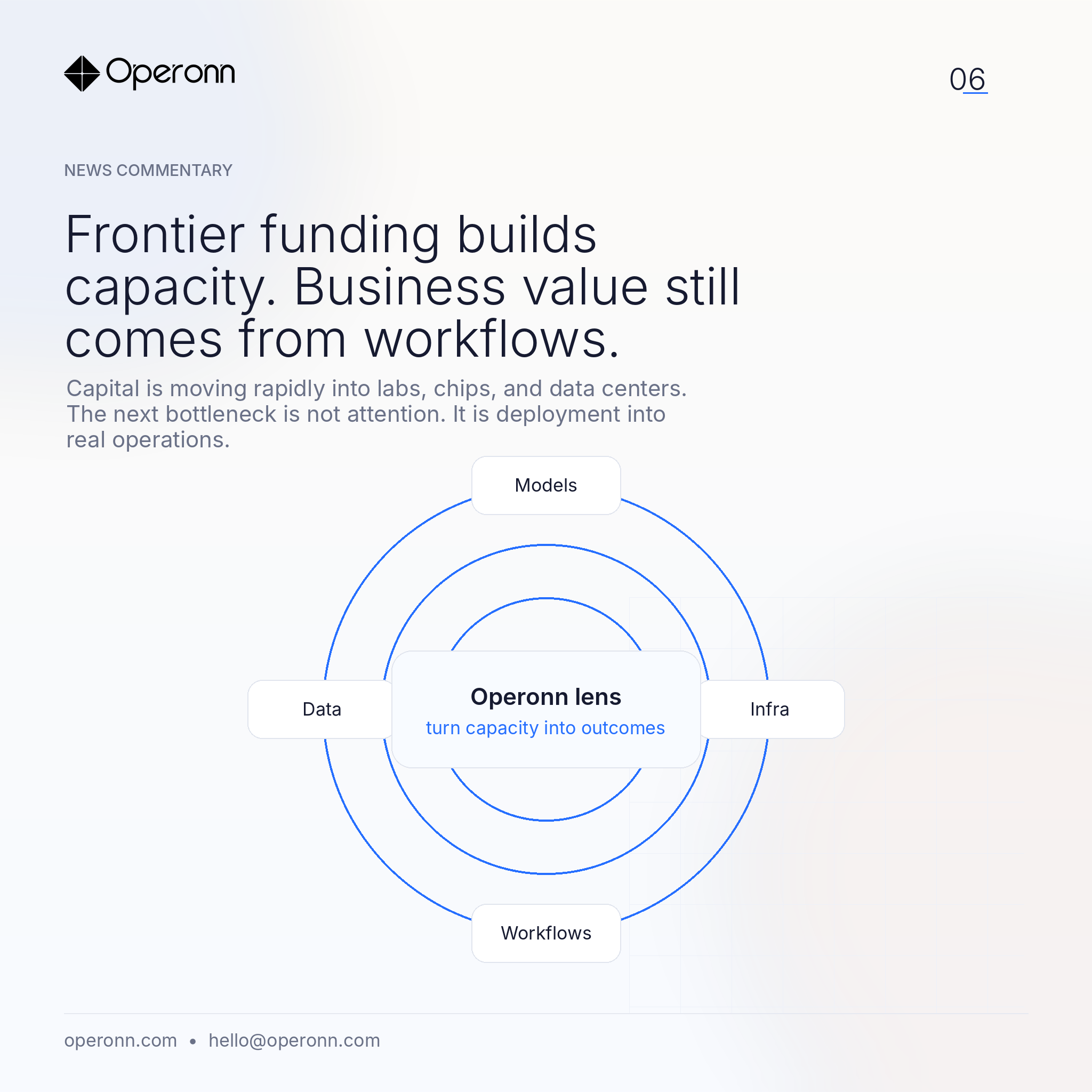

Frontier funding is accelerating. But adoption still arrives through workflows, not through compute.

Latency, turn-taking, and graceful interruption decide whether callers trust a voice AI. A checklist from production voice builds.

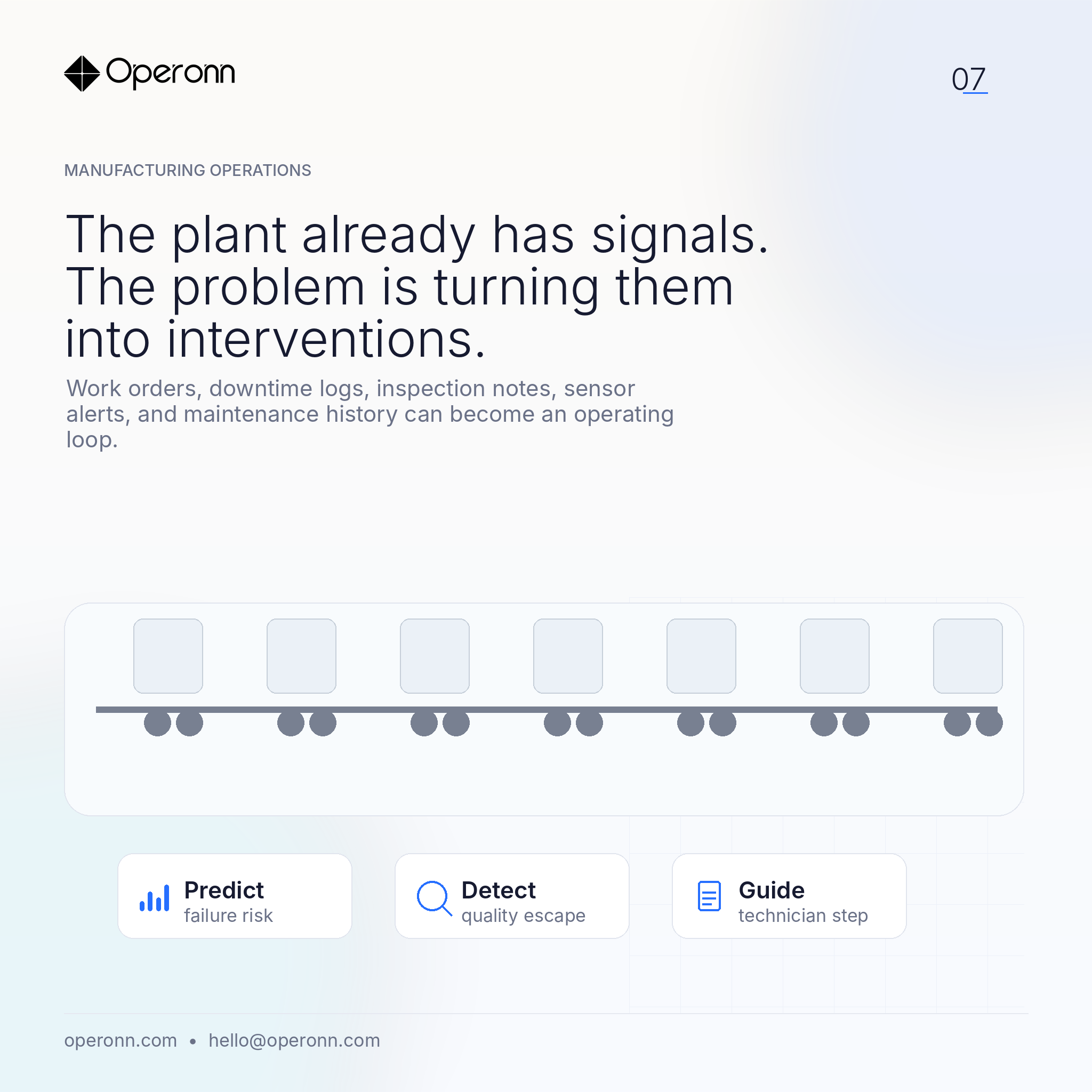

Work orders, downtime logs, and machine alerts already contain the signal. The gap is between signal and intervention.

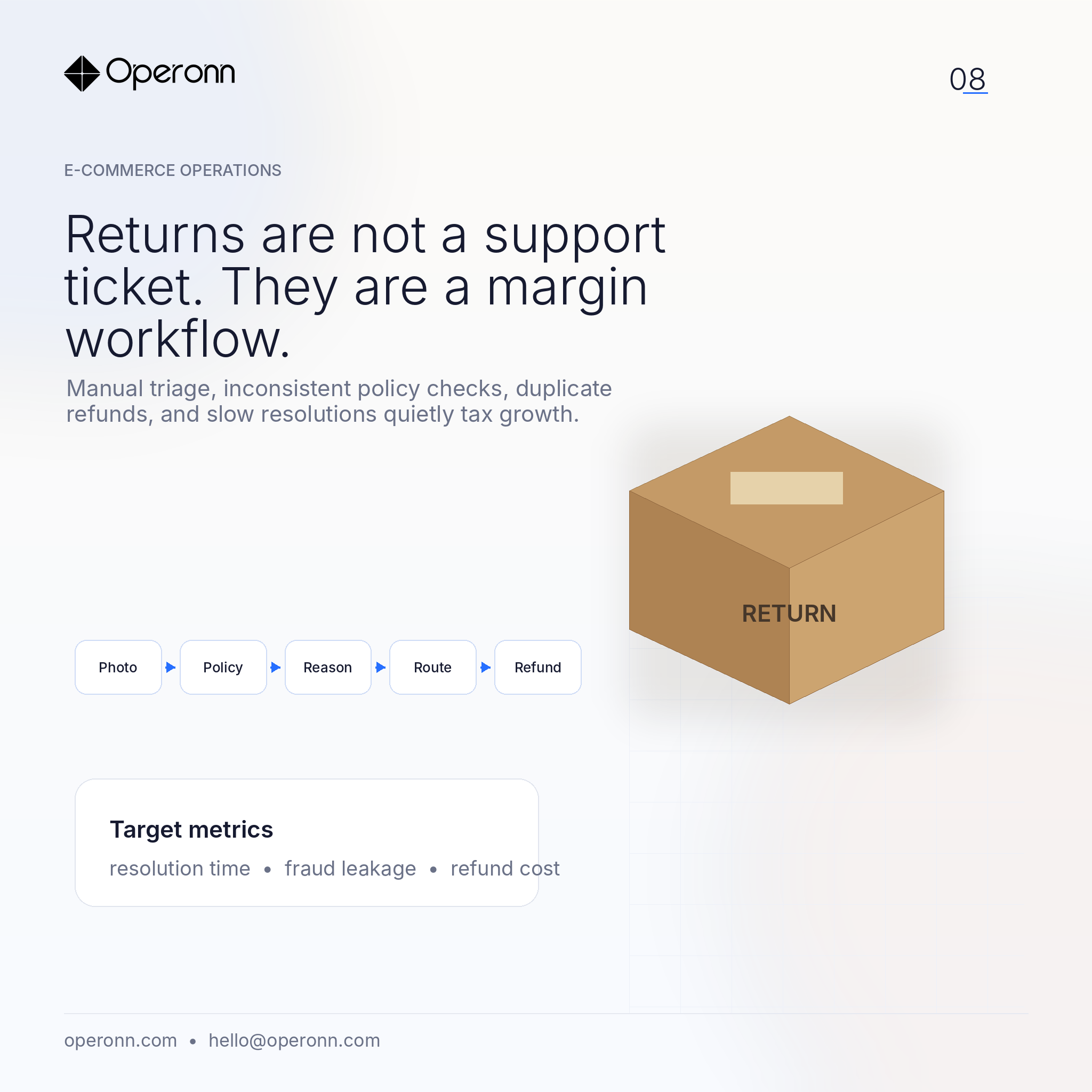

Manual reviews, duplicate refunds, policy exceptions, and unclear product photos all leak cost. Bounded AI fits these workflows well.

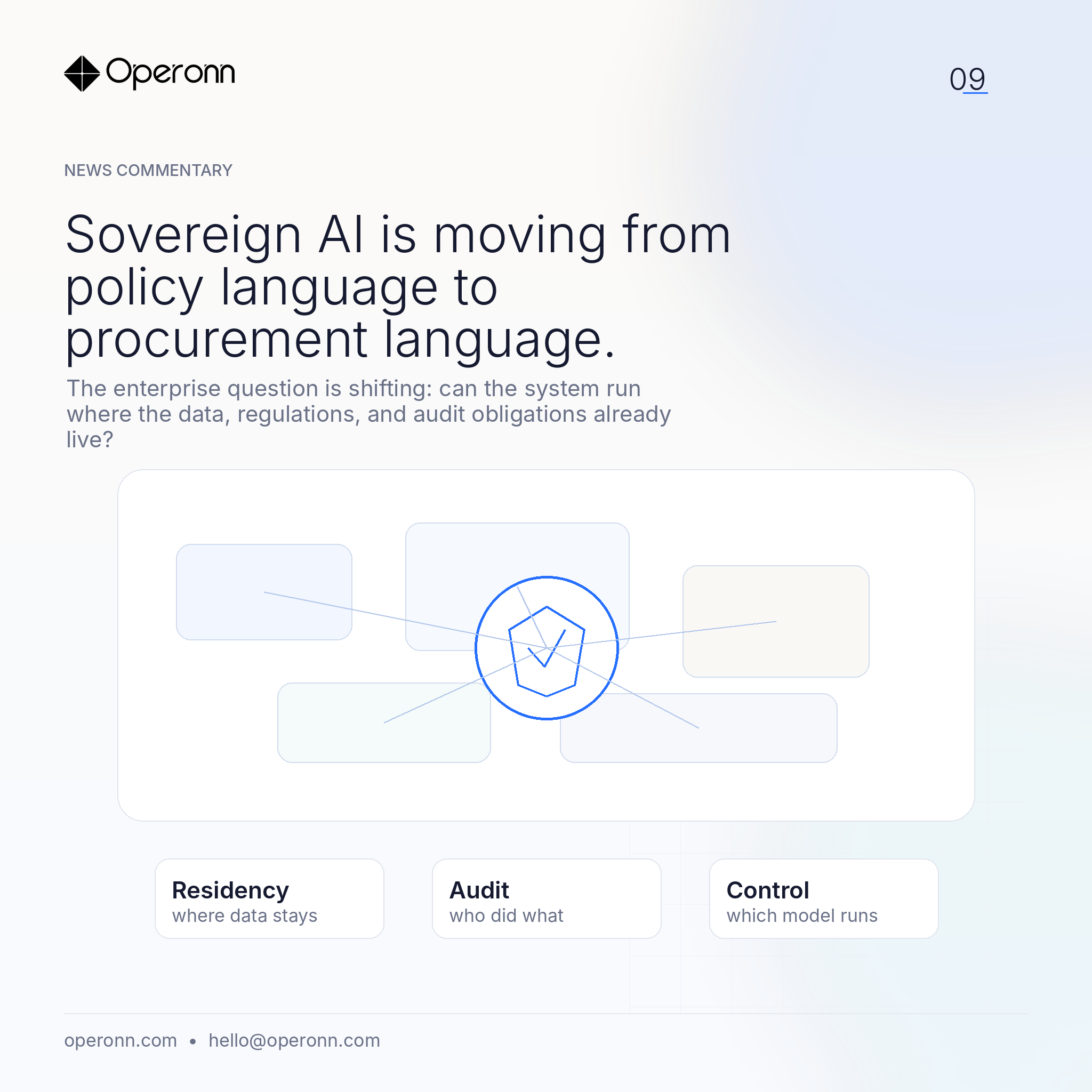

The enterprise question is shifting: can the system run where the data, regulations, and audit obligations already live?

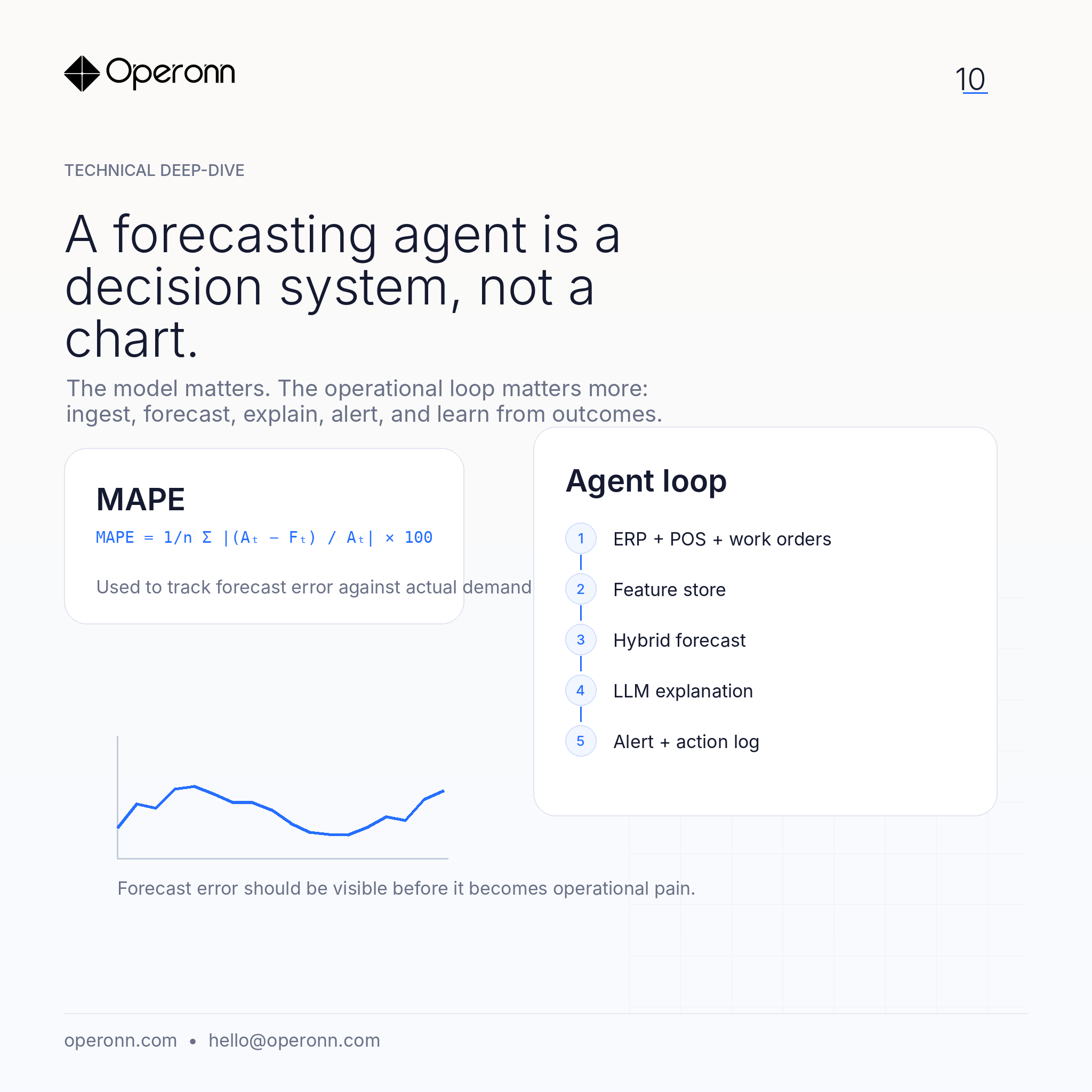

The model matters. The operational loop matters more: ingest, forecast, explain, alert, and learn from outcomes.

If you can't reproduce yesterday's quality today, you don't have a system — you have a coincidence.

When to retrieve, when to fine-tune, and when to do both. A decision framework from shipped enterprise systems.

Most AI projects fail at retrieval, not generation. Notes from a year of shipping RAG systems that have to be right.

Candidate evaluation systems have the highest bar for fairness and auditability of any AI use case. What our eval stack actually looks like.

The interesting ones become posts later — with permission.

hello@operonn.com